Several middle and high schools in China have been using advanced artificial intelligence (AI) and machine learning systems to evaluate the emotions, thoughts, and performance of students in class.

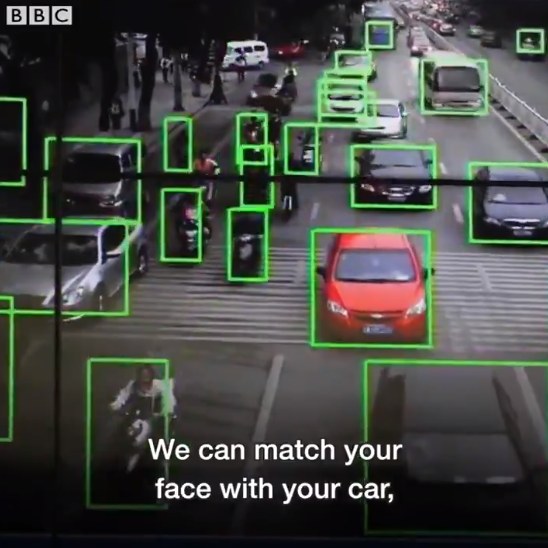

According to the BBC, the highly sophisticated surveillance system implemented by the Chinese government was introduced in late 2017. As a short documentary on the system shows, Chinese surveillance networks are able to autonomously track individuals across the country's major regions and compile a full profile of each person, covering their emotions, personal record, immigration status, criminal history, and more.

“We can match every face with an ID card and trace all your movements back one week in time. We can match your face with your car, match you with your relatives and people you’re in touch with. With enough cameras, we can know who you frequently meet,” said Yin Jun, an executive at Dahua Technology, a firm that has sold more than million facial recognition cameras in China alone.

Chinese Schools Using the Same System

On June 30, the Los Angeles Times reported that Chinese schools have begun adopting the same system the government uses to monitor streets, roads, and highways in major regions. The schools use it to generate comprehensive reports on each student's academic performance, emotional stability, personal connections, and overall standing within the institution.

An intriguing, and also frightening, aspect of the CCTV cameras used by both the government and commercial institutions in China is their ability to recognize patterns and generate detailed reports through AI and machine learning. When a camera observes the same pattern regularly, the system analyzes it to draw inferences about a particular individual or group of individuals.

The detection systems in the CCTV cameras used by Chinese schools and authorities can pick up subtle changes in emotion and mood, and can flag students who seem particularly moody or disengaged. At some schools that use this AI surveillance program, teachers are encouraged to talk with students who show signs of emotional volatility.

Clare Garvie, an associate at the Center on Privacy and Technology at Georgetown University Law Center, warned that it is dangerous to deprive students, especially young teenagers, of the chance to manage their own moods and work through negative emotions.

If teachers are required to step in and solve the personal problems of students who may not be comfortable discussing what triggered a change in their mood, those students may never get the chance to develop emotional independence and maturity.

“It’s an incredibly dangerous precedent to affix somebody’s behavior or certain actions based on emotions or characteristics presented in their face,” Garvie told the LA Times. She added that even if the AI surveillance system can assist teachers, it should not be used to punish or categorize students: “You shouldn’t use it to punish students or put a simple label on them.”

Zhu Juntao, a 17-year-old high school student, stressed that teenagers need to learn how to control their own emotions, and that constant interference from teachers could hinder their development into mature young adults.

Elon Musk’s Warning

Elon Musk, the founder and CEO of Tesla and SpaceX, two of the most successful technology companies of the modern era, has said that AI is far more dangerous than nukes, especially if it is misused.

“The biggest issue I see with so-called AI experts is that they think they know more than they do, and they think they are smarter than they actually are. This tends to plague smart people. They define themselves by their intelligence and they don’t like the idea that a machine could be way smarter than them, so they discount the idea — which is fundamentally flawed,” Musk said.

Image(s): Shutterstock.com